The AI chatbot with the biggest context window in 2026 can process thousands of pages of text in a single session — and knowing which tool leads the field could fundamentally change how you handle research, legal work, coding projects, and document analysis.

Bottom Line: Google Gemini has the biggest context window for mainstream users in 2026 at 1–2 million tokens. Claude offers 200K as standard (up to 1M on higher API tiers) with the best recall accuracy across the full window. For most professionals, 200K tokens is enough for everyday document tasks; full books, large codebases, and multi-document sets are the main exceptions.

What Is a Context Window and Why Does It Matter?

A context window is the maximum amount of text an AI model can read and work with at one time, measured in tokens (1,000 tokens is roughly 750 English words). Think of it as the AI’s working memory: once a session exceeds the limit, the earliest material typically drops out of the window and the model can no longer refer to it.
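As a rough illustration of the words-to-tokens rule of thumb above, the sketch below estimates a document's token count and checks it against a window size. The function names, the `reserve_tokens` parameter, and the 90,000-word example are assumptions made for illustration; real token counts depend on the tokenizer and the language of the text.

```python
import math

# Rule of thumb from the text: about 750 words per 1,000 tokens.
# Real ratios vary by tokenizer and language, so treat this as an estimate.
WORDS_PER_1K_TOKENS = 750


def estimate_tokens(word_count: int) -> int:
    """Estimate the token count for a given word count, rounded up."""
    return math.ceil(word_count * 1000 / WORDS_PER_1K_TOKENS)


def fits_in_window(word_count: int, window_tokens: int, reserve_tokens: int = 0) -> bool:
    """Return True if the estimated tokens plus a reserve for the reply fit in the window."""
    return estimate_tokens(word_count) + reserve_tokens <= window_tokens


if __name__ == "__main__":
    words = 90_000  # hypothetical novel-length manuscript
    print(estimate_tokens(words))
    print(fits_in_window(words, 128_000, reserve_tokens=4_000))
```

Reserving some tokens for the model's reply is a design choice in this sketch: the prompt and the response usually share the same window, so a document that fills the window exactly leaves no room for an answer.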

Why the size matters for real work:

- Analysing an entire legal contract or annual report without losing detail

- Reviewing a complete software codebase in one session

- Maintaining long research conversations without early context being forgotten

- Processing entire books or multi-document research sets simultaneously


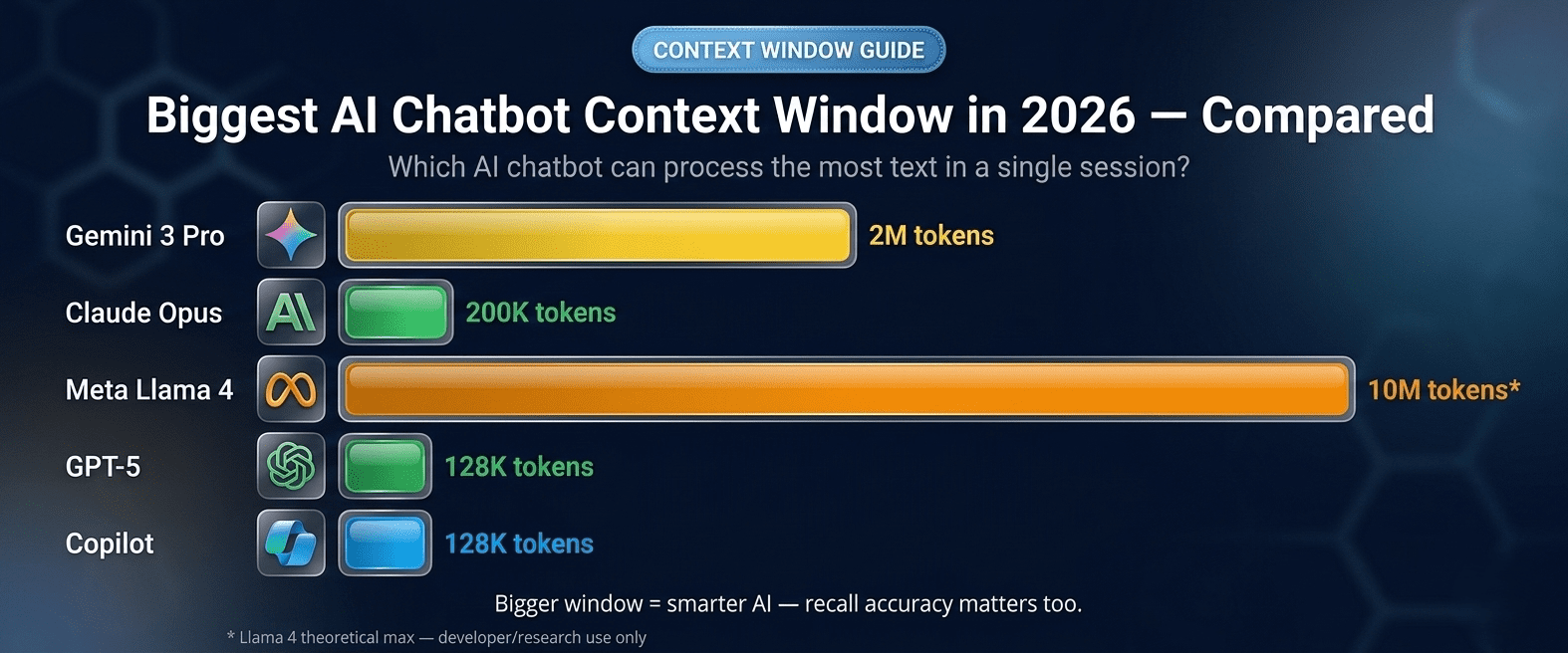

AI Chatbot Biggest Context Window 2026 — Full Comparison

| AI Chatbot | Context Window | Notes |
| --- | --- | --- |
| Google Gemini 3 Pro | 1M – 2M tokens | Largest standard context window |
| Claude (Anthropic) | 200K standard / 1M beta | Best recall accuracy across window |
| Meta Llama 4 | Up to 10M tokens | Largest theoretical; developer/research use |
| ChatGPT (GPT-5) | 128K – 400K tokens | Reliable but smaller than top rivals |
| DeepSeek R1 | 128K – 164K tokens | Strong reasoning at lower cost |
| Microsoft Copilot | 128K tokens | Tied to GPT-4 architecture |
| Perplexity AI | 128K tokens | Augmented by live web search |
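To make the comparison easier to query, here is a minimal sketch that stores the upper end of each range from the table and lists the chatbots whose quoted maximum covers a given requirement. The `Chatbot` class and `candidates` function are hypothetical names, "K" is read as thousands, and the figures are only those quoted above; actual limits vary by plan and change over time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chatbot:
    name: str
    max_tokens: int  # upper end of the range quoted in the comparison table


# Values copied from the table above, not from vendor documentation.
CHATBOTS = [
    Chatbot("Google Gemini 3 Pro", 2_000_000),
    Chatbot("Claude (Anthropic)", 1_000_000),
    Chatbot("Meta Llama 4", 10_000_000),
    Chatbot("ChatGPT (GPT-5)", 400_000),
    Chatbot("DeepSeek R1", 164_000),
    Chatbot("Microsoft Copilot", 128_000),
    Chatbot("Perplexity AI", 128_000),
]


def candidates(required_tokens: int) -> list[str]:
    """Names of chatbots whose quoted maximum covers the requirement, smallest window first."""
    fitting = [c for c in CHATBOTS if c.max_tokens >= required_tokens]
    return [c.name for c in sorted(fitting, key=lambda c: c.max_tokens)]


if __name__ == "__main__":
    print(candidates(500_000))
```

Sorting smallest-first reflects the article's point that raw size is not the only criterion: the smallest window that fits is often the sensible place to start.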

Which Chatbot Has the Biggest Context Window?

1. Google Gemini — Biggest Practical Context Window (1M–2M Tokens)

Gemini 3 Pro offers the largest context window available to mainstream users: 1 to 2 million tokens, or roughly 1,333–2,666 pages of text at about 750 tokens (around 560 words) per page. It is also backed by real-time Google Search, so it can supplement what is in its context window with live web data.
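The page figures above work out at about 750 tokens per page. The short sketch below reproduces that arithmetic; the constant and function name are illustrative assumptions, and a denser or sparser page layout would change the result.

```python
# Assumption: about 750 tokens per page, the ratio implied by the page figures above.
TOKENS_PER_PAGE = 750


def tokens_to_pages(tokens: int, tokens_per_page: int = TOKENS_PER_PAGE) -> int:
    """Convert a token budget to whole pages, rounding down."""
    return tokens // tokens_per_page


if __name__ == "__main__":
    for window in (200_000, 1_000_000, 2_000_000):
        print(f"{window:,} tokens is about {tokens_to_pages(window):,} pages")
```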

Best for: Processing entire books, large code repositories, or extensive document sets in one session.

Try it at: gemini.google.com

2. Claude — Biggest Context Window With Reliable Recall (200K–1M Tokens)

Claude offers 200,000 tokens as standard (up to 1M on higher API tiers) — but what sets it apart is recall accuracy across the full window. Claude Opus 4.6 maintains less than 5% accuracy degradation across its entire 200K context, a level most competitors cannot match.

Best for: Legal, research, and analysis work where accurate recall across the full document is essential.

Try it at: claude.ai

3. Meta Llama 4 — Largest Theoretical Context (Up to 10M Tokens)

Meta Llama 4 features the largest theoretically available context window, at up to 10 million tokens. It is primarily a developer and research model, and it is free to self-host under Meta’s license.

Explore at: llama.meta.com

The Critical Caveat: Advertised vs. Effective Context

A model’s advertised context window is not the same as its effective context window. Most models become unreliable before their stated maximum, a phenomenon called context degradation. Its best-known form is the ‘lost-in-the-middle’ effect, where details buried in the middle of a long input are recalled less reliably. Of the chatbots compared here, Claude is rated best at maintaining reliable performance close to its advertised limit.
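One common way to probe the gap between advertised and effective context is a "needle in a haystack" test: hide one fact at different depths in filler text and check whether the model can retrieve it. The sketch below is a minimal harness under stated assumptions. `ask_model` is a hypothetical callable you would replace with a real chatbot call, and `toy_model` is only a stand-in that sees the last 2,000 characters, so it demonstrates the harness rather than any real model's behaviour.

```python
from typing import Callable

FILLER = "The quick brown fox jumps over the lazy dog."
NEEDLE = "The secret code is 7421."
QUESTION = "What is the secret code?"


def build_prompt(n_sentences: int, depth: float) -> str:
    """Place NEEDLE at a relative depth (0.0 = start, 1.0 = end) inside filler text."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    sentences = [FILLER] * n_sentences
    sentences.insert(round(depth * n_sentences), NEEDLE)
    return " ".join(sentences) + "\n\n" + QUESTION


def recall_by_depth(
    ask_model: Callable[[str], str], n_sentences: int, depths: list[float]
) -> dict[float, bool]:
    """For each depth, record whether the model's answer contains the hidden code."""
    return {d: "7421" in ask_model(build_prompt(n_sentences, d)) for d in depths}


def toy_model(prompt: str) -> str:
    """Stand-in for a real chatbot call: it only 'sees' the last 2,000 characters."""
    visible = prompt[-2000:]
    return "7421" if NEEDLE in visible else "I don't know."


if __name__ == "__main__":
    print(recall_by_depth(toy_model, 200, [0.0, 0.5, 1.0]))
```

With a real model in place of `toy_model`, repeating the run at several input lengths and depths gives a rough picture of where recall starts to slip.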


What Context Window Size Do You Actually Need?

| Use Case | Recommended Context Size |
| --- | --- |
| Normal conversations and Q&A | 32K–128K tokens |
| Analysing a long report or contract | 128K–200K tokens |
| Reviewing a full book or large dataset | 200K–1M tokens |
| Full codebase analysis | 1M+ tokens |
| Multi-document research synthesis | 1M+ tokens |

Frequently Asked Questions

Does a bigger context window mean a smarter AI?

No. Context window size and model intelligence are separate qualities. A larger window means the AI can remember more text — it does not make the underlying model more accurate or capable.

Why does context degradation happen?

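AI models use attention mechanisms whose compute cost grows steeply with context length; in standard self-attention it grows with the square of the number of tokens. Separately, information in the middle of very long inputs tends to be retrieved less reliably than information near the start or end, which is the lost-in-the-middle effect documented in multiple research papers.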
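To give a sense of why longer contexts are harder to compute, the sketch below counts the token-to-token scores in a single full self-attention matrix, which grows with the square of the input length. This assumes plain, unoptimized attention for one layer and one head; production models may use optimizations that the article does not describe, so read the numbers as a scaling illustration only.

```python
def attention_pairs(n_tokens: int) -> int:
    """Number of token-to-token scores in one full self-attention matrix."""
    return n_tokens * n_tokens


if __name__ == "__main__":
    baseline = attention_pairs(128_000)
    for n in (128_000, 200_000, 1_000_000):
        ratio = attention_pairs(n) / baseline
        print(f"{n:>9,} tokens -> {attention_pairs(n):.2e} scores ({ratio:.1f}x the 128K cost)")
```

Under this assumption, doubling the input quadruples the number of scores, so moving from 128K to 1M tokens multiplies the work by roughly 61 rather than by 8.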

Can I use Claude’s 1M-token context window for free?

No. The 1M-token context is available on higher API tiers only. The standard free and Pro plans offer 200,000 tokens. For free AI tool options, see Free AI chatbots worth using in 2026.

Final Answer: Which AI Chatbot Has the Biggest Context Window in 2026?

Google Gemini leads on raw window size at 1–2 million tokens. Claude leads on reliable recall accuracy within its window. For most professionals, Claude’s 200K standard is sufficient for everyday document tasks; full books, large codebases, and multi-document research sets are the cases that call for 1M tokens or more.