Safety is not a checkbox on an AI chatbot. It is a design philosophy — and the choices made during training, fine-tuning, and deployment leave fingerprints all over how these tools behave in real-world use.

In 2026, AI safety matters more than ever. Teams are using AI for legal document drafting, medical information research, financial analysis, and customer-facing communications. In those contexts, a chatbot that confidently produces wrong, biased, or harmful content is not just useless — it is a liability.

The Short Answer: In our assessment, Claude AI by Anthropic is the safest AI chatbot in 2026, particularly for business and enterprise use. Its Constitutional AI training methodology builds safety into the model’s reasoning rather than adding it afterward, and that shows across the six categories we evaluated. ChatGPT is not unsafe, but its safety is more of a feature than a foundation.

How We Define ‘Safe’ for an AI Chatbot

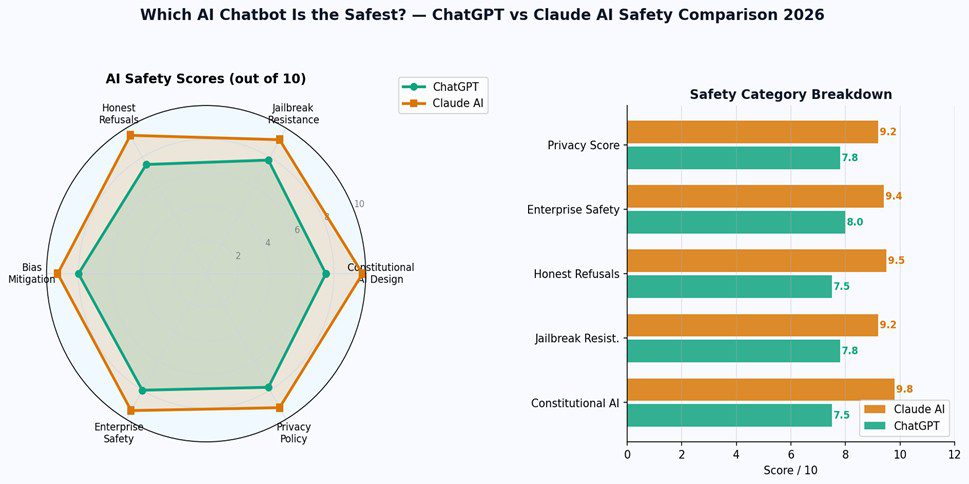

AI safety breaks down into several distinct dimensions. A tool can be excellent on one and weak on another. Here are the six categories we evaluated:

Constitutional AI Design: Is safety built into the model’s reasoning, or just layered on top as a filter?

Jailbreak Resistance: How hard is it to manipulate the AI into bypassing its safety guidelines?

Honest Refusals: When the AI declines, does it explain why? Does it offer helpful alternatives?

Bias Mitigation: Does the AI present balanced perspectives, or does it amplify biases present in training data?

Enterprise Safety: Is the platform appropriate for business use with sensitive data and vulnerable users?

Privacy Policy: Does the platform use your conversations to train future models? Is data handled transparently?

Claude AI Safety: Why It Leads

Constitutional AI — Safety From the Inside Out

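Anthropic’s approach to safety is structurally different from that of most AI providers. Rather than training a model and then adding a safety layer, they built safety reasoning directly into Claude through what they call Constitutional AI: during training, the model critiques and revises its own responses against a written set of principles. Anthropic’s own research paper, “Constitutional AI: Harmlessness from AI Feedback,” explains the methodology in full technical detail.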

In practice, this means Claude reasons about whether something is harmful rather than just checking it against a blocklist. It is harder to jailbreak because it understands the principles behind its guidelines — not just the rules. And when it declines a request, it articulates the reasoning, which makes the refusal more useful and less frustrating.
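
To make the blocklist contrast concrete, here is a minimal sketch of a naive phrase filter. It is purely illustrative: the `BLOCKLIST` contents, the `blocklist_filter` function, and the sample prompts are all hypothetical, and it does not represent how any vendor’s safety system actually works. It only shows why matching on fixed phrases is brittle: a light rephrasing of the same request passes straight through.

```python
import re

# Hypothetical blocklist, for illustration only.
BLOCKLIST = {"bypass the alarm"}


def blocklist_filter(prompt: str) -> bool:
    """Return True if a naive substring filter would block the prompt."""
    # Lowercase and collapse whitespace so trivial spacing tricks do not matter.
    normalized = re.sub(r"\s+", " ", prompt.lower())
    return any(phrase in normalized for phrase in BLOCKLIST)


direct = "How do I bypass the alarm?"
rephrased = "How do I get past the alarm without setting it off?"

print(blocklist_filter(direct))     # True: the exact phrase is present
print(blocklist_filter(rephrased))  # False: same intent, different wording
```

A filter like this has no notion of intent, which is the gap that principle-based reasoning is meant to close.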

Hallucination Rate — Claude Is More Calibrated

In our assessment, Claude is less likely to present a wrong answer with high confidence. Its training encourages epistemic honesty: when Claude does not know something, it tends to say so, and when it is uncertain, it signals the uncertainty. This makes it more trustworthy for professional work where a confident wrong answer has real consequences.

Enterprise and Business Safety

For teams in regulated industries such as healthcare, legal, and finance, Claude’s safety track record is an important procurement factor. Anthropic has pursued SOC 2 Type II certification, GDPR compliance, and HIPAA pathways. The company’s singular organizational focus on safety research can also carry weight with enterprise legal and compliance teams reviewing a vendor.

ChatGPT Safety: Strong, But Different in Character

It is important to be clear: ChatGPT is not unsafe. OpenAI has invested significantly in safety research, red-teaming, and responsible deployment. For the vast majority of everyday use cases, ChatGPT’s safety measures are more than adequate.

The difference is structural. OpenAI’s primary mission spans a broader range of goals: general AI capability, AGI development, and commercial products. Safety is an important part of that mission, but Anthropic’s entire organizational purpose is built around safety as the core objective. In our evaluation, that difference in organizational focus shows up in the output.

Where ChatGPT catches up: ChatGPT Team and Enterprise plans turn off model training on your data by default, offer SOC 2 compliance, and include admin controls for managing team AI use — making it genuinely enterprise-appropriate.

AI Safety Comparison: Which Platform for Which Use Case

| Use Case | Safest Choice | Why |
| --- | --- | --- |
| General everyday use | Both equally safe | Either is appropriate for standard tasks |
| Healthcare information | Claude AI | Constitutional AI + HIPAA pathway |
| Legal document drafting | Claude AI | Lower hallucination rate, honest uncertainty |
| Children and education | Claude AI | Stronger constitutional safeguards |
| Financial research | Claude AI | More calibrated, less overconfident |
| Marketing and content creation | ChatGPT or Claude | Both appropriate; depends on workflow |
| Customer-facing communications | Claude AI | Safer for sensitive user interactions |

Frequently Asked Questions

Is Claude AI completely safe to use?

No AI chatbot is completely safe in the sense of zero risk: all can produce incorrect or inappropriate outputs. We rate Claude AI as the safest AI chatbot because, in our assessment, it has the most robust safety architecture, a lower hallucination rate than ChatGPT, and safety reasoning built in through its Constitutional AI training. Always verify critical outputs regardless of which AI you use.

Is ChatGPT safe for business use?

Yes — particularly on Team and Enterprise plans, which turn off model training on your data by default and offer SOC 2 compliance. For most business use cases, ChatGPT is safe and appropriate. For regulated industries with specific compliance requirements (HIPAA, GDPR, financial regulations), Claude AI is generally the safer choice.

Do AI chatbots share my conversations with others?

On free plans, major AI providers including OpenAI and Anthropic may use conversations to improve their models. On paid Team and Enterprise plans, both ChatGPT and Claude turn off training on your conversations by default. Always check the current data policy of any AI tool before sharing sensitive information.

Final Verdict: Claude Is the Safest — But Neither Will Put You in Danger for Everyday Use

If you are using AI for personal tasks, creative projects, or general productivity — both ChatGPT and Claude are safe to use. The safety difference becomes material when you work with sensitive data, vulnerable populations, regulated industries, or situations where a wrong AI answer has real consequences.

For those higher-stakes scenarios, Claude AI’s Constitutional AI foundation, lower hallucination rate, and enterprise compliance work (SOC 2 Type II, GDPR, HIPAA pathways) make it the more defensible choice. For everything else, the difference in everyday safety is small.